Don't just sign the prescription

Your AI agent just handed you a pull request. Before you approve it, do you actually know what's in it?

“Hey doc, can you sign this prescription for me?” A good doctor doesn’t just sign. They check your history, your symptoms, your allergies. They have a mental model of how the body works, and they run your request through it before putting their name on anything.

That mental model isn’t something they invented for you. It’s how they were trained. It’s how they train new doctors. It’s how they talk to specialists. It’s how they navigate through ambiguity.

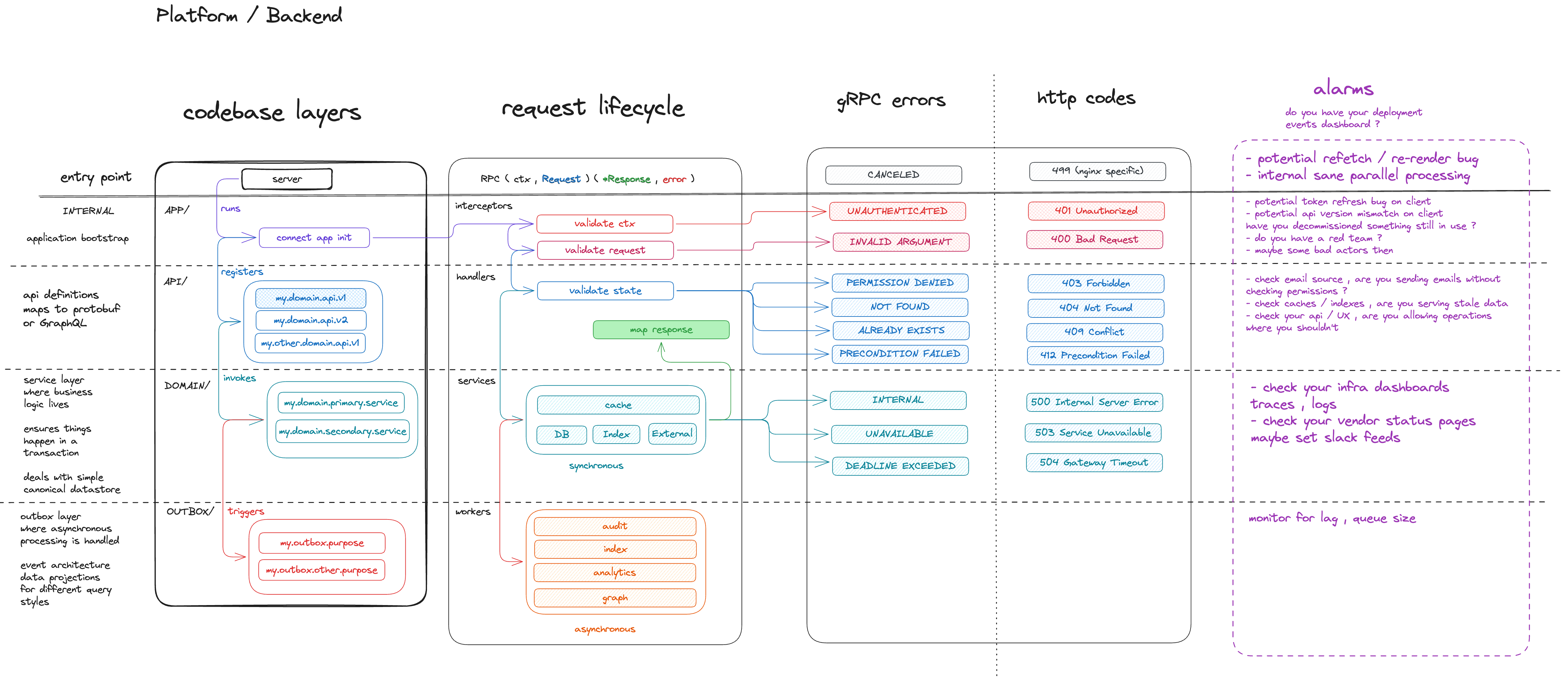

I have one of those for engineering organisations as a whole, but today I’ll cover just a slice of it: backend systems. I’ve used this one for years to onboard engineers (and myself), talk through system design, and give people a map when they are let loose in a codebase.

They say a picture speaks a thousand words… well, I stopped saying I’m terrible at drawing and picked up the brush to speak my mind.

The model doesn’t just help you see what’s there. It helps you spot what’s missing and notice what needs a closer look: the empty quadrant, the layer nobody thought about. I hear MCPs are the new API layer… well, what comes next?

Now with AI agents, it turns out this particular model works beautifully for them too. Whether your tech stack is in Go, Java, TypeScript, Rust… the same concepts apply. You can curate and orchestrate your development agents, your review agents, and your incident management agents too.

If you happen to be in a highly regulated industry, you can also operate safely closer to your compliance constraints (aka take accountability for your AI).

The four lenses

Four lenses, each split into layers that guide you through key questions.

- Codebase layers: where does the code live? Every backend has a shape, and if you can’t name the layers, you’re navigating blind.

- Request lifecycle: what happens when a request comes in? This one breaks down into interceptors, handlers, services, workers… each with a clear role, and no, they’re not interchangeable.

- Error handling: what are the common error cases for each layer? Once they are mapped, you can watch for anomalies.

- Alarms: when something breaks, how do we triage it? Also per layer, because the runbook for a failing interceptor looks nothing like the runbook for a failing worker… and the two imply different impact and severity.

Put them together and you stop guessing.

Codebase layers

Four layers:

- APP/: entry point. Bootstrap, wiring, configuration, graceful shutdown.

- API/: the contract. Protobuf, GraphQL, REST. It’s what the outside world touches. Registers handlers, defines the shape.

- DOMAIN/: business logic. Transactions, canonical data structures, core operations. What you strive to keep simple.

- OUTBOX/: async processing. Event projections, data fan-out. Triggered outside the request path. What you must keep consistent and abstract away into a “platform”.

When I ask an agent to implement something, I tell it which layer. That alone kills half the architectural drift.

It allows for small, targeted pull requests that I can audit retrospectively (aka AI compliance) rather than review continuously (aka AI trust).
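One way to make "tell it which layer" checkable is to classify the files a pull request touches. The sketch below is only an illustration: the lowercase directory names and the helper names (`layer_of`, `layers_touched`, `is_targeted`) are assumptions, not part of any real tool.

```python
from pathlib import PurePosixPath
from typing import List, Optional, Set

# Layer names come from the list above; lowercase directory names are an assumption.
LAYERS = ("app", "api", "domain", "outbox")


def layer_of(path: str) -> Optional[str]:
    """Return the first layer directory found in the path, or None if there is none."""
    for part in PurePosixPath(path).parts:
        if part.lower() in LAYERS:
            return part.lower()
    return None


def layers_touched(changed_files: List[str]) -> Set[str]:
    """Collect the layers a change set touches; paths outside all layers count as 'unmapped'."""
    return {layer_of(f) or "unmapped" for f in changed_files}


def is_targeted(changed_files: List[str], expected_layer: str) -> bool:
    """True when every changed file stays inside the layer the agent was told to work in."""
    return layers_touched(changed_files) == {expected_layer}
```

A pull request that was briefed as an API/ change but also edits OUTBOX/ files would fail `is_targeted(files, "api")`, which is a cheap signal that it deserves a closer look.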

Request lifecycle

A request flows through a clear pipeline:

- Interceptors: validate context and request shape. Are you who you say you are? Is this a valid request?

- Handlers: validate state, map the response. Does this resource exist? Is this operation allowed right now?

- Services: synchronous work. Database, cache, external calls.

- Workers: async work after the response. Audit, indexing, analytics.

Agent puts auth checks in a handler? Wrong, that’s an interceptor. Indexing in the request path? Move it to a worker. The lifecycle is the checklist.
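The pipeline above can be sketched in a few lines. This is a toy, framework-free illustration: `Request`, `StageError`, the in-memory `STORE`, and the `JOBS` queue are all hypothetical stand-ins for whatever your stack provides.

```python
from dataclasses import dataclass
from queue import Queue
from typing import Dict, Optional


@dataclass
class Request:
    token: Optional[str]
    resource_id: str


class StageError(Exception):
    """Carries the stage that rejected the request and the error code it chose."""

    def __init__(self, stage: str, code: str) -> None:
        super().__init__(f"{stage}: {code}")
        self.stage = stage
        self.code = code


# Hypothetical in-memory stand-ins for a database and a job queue.
STORE: Dict[str, dict] = {"order-1": {"status": "open"}}
JOBS: Queue = Queue()


def interceptor(req: Request) -> None:
    # Context and request shape only: who are you, and is this a valid request?
    if not req.token:
        raise StageError("interceptor", "UNAUTHENTICATED")
    if not req.resource_id:
        raise StageError("interceptor", "INVALID_ARGUMENT")


def service_load(resource_id: str) -> Optional[dict]:
    # Synchronous work: database, cache, external calls.
    return STORE.get(resource_id)


def handler(req: Request) -> dict:
    # State validation and response mapping.
    record = service_load(req.resource_id)
    if record is None:
        raise StageError("handler", "NOT_FOUND")
    return {"id": req.resource_id, "status": record["status"]}


def handle(req: Request) -> dict:
    interceptor(req)
    response = handler(req)
    # Async work is only enqueued here; a worker would drain JOBS after the response.
    JOBS.put(("audit", req.resource_id))
    return response
```

Because each stage is its own function, a review can ask a narrow question: does the auth check live in `interceptor`, and is anything slow hiding in `handle` that belongs on the queue?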

Error handling

For each common implementation, you map out the error cases upfront. What can go wrong at this layer, and what should the caller hear?

- Interceptors: UNAUTHENTICATED, INVALID_ARGUMENT, mapping to 401, 400.

- Handlers: PERMISSION_DENIED, NOT_FOUND, ALREADY_EXISTS, PRECONDITION_FAILED, mapping to 403, 404, 409, 412.

- Services: INTERNAL, UNAVAILABLE, DEADLINE_EXCEEDED, mapping to 500, 503, 504.

A handler returning INTERNAL for a missing resource? Bug. An interceptor returning NOT_FOUND? Design smell. The layer tells you where the error belongs.
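The error map is small enough to keep as data. The sketch below copies the codes and HTTP statuses from the lists above; the table shape and the helper names (`http_status`, `owning_layer`, `is_misplaced`) are assumptions for illustration.

```python
from typing import Dict, Optional

# Codes and HTTP statuses are taken from the per-layer lists above.
ERRORS_BY_LAYER: Dict[str, Dict[str, int]] = {
    "interceptor": {"UNAUTHENTICATED": 401, "INVALID_ARGUMENT": 400},
    "handler": {
        "PERMISSION_DENIED": 403,
        "NOT_FOUND": 404,
        "ALREADY_EXISTS": 409,
        "PRECONDITION_FAILED": 412,
    },
    "service": {"INTERNAL": 500, "UNAVAILABLE": 503, "DEADLINE_EXCEEDED": 504},
}


def owning_layer(code: str) -> Optional[str]:
    """Return the layer the table assigns this code to, or None for an unknown code."""
    for layer, codes in ERRORS_BY_LAYER.items():
        if code in codes:
            return layer
    return None


def http_status(code: str) -> int:
    """Map an error code to its HTTP status; raises KeyError for an unknown code."""
    layer = owning_layer(code)
    if layer is None:
        raise KeyError(code)
    return ERRORS_BY_LAYER[layer][code]


def is_misplaced(layer: str, code: str) -> bool:
    """True when a layer originates a code that the table assigns to a different layer."""
    owner = owning_layer(code)
    return owner is not None and owner != layer
```

Here `is_misplaced("handler", "INTERNAL")` and `is_misplaced("interceptor", "NOT_FOUND")` both return `True`, matching the two smells described above. The check is about where an error originates; a handler that merely passes a service error through is a separate question.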

Alarms

Alarms without runbooks are just noise. Each layer gets its own triage path:

- Interceptors firing? Token refresh bugs, API version mismatches, refetch loops. Is load shedding working?

- Handlers firing? Stale caches, bad indexes, operations that shouldn’t be allowed.

- Services firing? Infra dashboards, traces, logs, vendor status pages.

- Workers firing? Lag, queue size. Async failures are the silent killers. Nobody notices until the backlog is enormous.

When I set up a feature, I ask: what breaks, and what does the runbook look like?
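If alarms without runbooks are noise, one simple guard is to keep the triage paths as data and check that every alarm's layer has one. The checklist entries below are copied from the list above; the `TRIAGE` structure and both function names are hypothetical.

```python
from typing import Dict, Iterable, List

# First things to check per layer, taken from the triage list above.
TRIAGE: Dict[str, List[str]] = {
    "interceptor": ["token refresh bugs", "API version mismatches", "refetch loops", "load shedding"],
    "handler": ["stale caches", "bad indexes", "operations that shouldn't be allowed"],
    "service": ["infra dashboards", "traces", "logs", "vendor status pages"],
    "worker": ["lag", "queue size"],
}


def first_checks(layer: str) -> List[str]:
    """Return the triage checklist for an alarm's layer."""
    if layer not in TRIAGE:
        raise LookupError(f"no runbook for layer {layer!r}")
    return TRIAGE[layer]


def layers_without_runbook(alarm_layers: Iterable[str]) -> List[str]:
    """List the layers that have alarms configured but no triage path."""
    return sorted(set(alarm_layers) - set(TRIAGE))
```

Running `layers_without_runbook` over the layers of your configured alarms gives a short list of the places where an alert would fire and nobody would know what to do next.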

Why this matters for agentic workflows

AI agents are fast. But speed without structure produces code you can’t reason about.

This model gives me three things:

- A shared language. I don’t say “build me an API”. I say “add a handler in API/ that validates state, returns ALREADY_EXISTS if the resource is there, and triggers an outbox event for indexing”.

- A review checklist. Right layer? Right lifecycle stage? Right error semantics? Right observability? Same questions I’d ask a junior engineer. Same questions I now ask the agent.

- Portability. The model doesn’t care about your stack. The lenses and layers are the same everywhere. New codebase, new language, same whiteboard.
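To make the shared-language example concrete, here is roughly what an agent might produce from that one-sentence brief. It is a minimal sketch with in-memory stand-ins: `RESOURCES`, `OUTBOX`, `AlreadyExists`, and `create_resource_handler` are hypothetical names, and a real implementation would typically write the resource and the outbox event in the same transaction.

```python
from typing import Dict, List


class AlreadyExists(Exception):
    """Raised by the handler when the resource is already there."""

    code = "ALREADY_EXISTS"


# Hypothetical in-memory stand-ins for the domain store and the outbox table.
RESOURCES: Dict[str, dict] = {}
OUTBOX: List[dict] = []


def create_resource_handler(resource_id: str, payload: dict) -> dict:
    # Handler stage: validate state before doing any work.
    if resource_id in RESOURCES:
        raise AlreadyExists(resource_id)

    RESOURCES[resource_id] = payload

    # Record an outbox event; a worker would pick it up and index
    # outside the request path.
    OUTBOX.append({"type": "index_resource", "resource_id": resource_id})

    # Map the response.
    return {"id": resource_id, "created": True}
```

Each clause of the brief maps to a few lines you can point at in review: the state check, the error code, and the outbox event.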

And you don’t have to do every review yourself. The same model that makes you a better reviewer makes a great briefing for a review agent. Give it the four lenses. Tell it what layer the change targets, what errors are acceptable, what observability you expect. One agent writes, another agent reviews, and the mental model is the contract between them.
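A briefing like that can be kept as a small structure and rendered into a prompt. The sketch below is one possible shape, not a prescribed format: `ReviewBrief`, its field names, and the prompt wording are all assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ReviewBrief:
    target_layer: str                  # e.g. "API/"
    lifecycle_stage: str               # e.g. "handler"
    acceptable_errors: List[str]       # error codes the change may return
    expected_observability: List[str]  # alarms or signals the change should come with

    def render(self) -> str:
        """Turn the brief into plain-text instructions for a review agent."""
        lines = [
            "Review this change against four lenses.",
            f"1. Codebase layer: the change should stay inside {self.target_layer}.",
            f"2. Request lifecycle: the logic belongs in the {self.lifecycle_stage} stage.",
            "3. Error handling: acceptable error codes are "
            + ", ".join(self.acceptable_errors) + ".",
            "4. Alarms: expect " + ", ".join(self.expected_observability) + ".",
            "Flag anything outside these bounds instead of approving it.",
        ]
        return "\n".join(lines)
```

The same `ReviewBrief` can be handed to the writing agent and the reviewing agent, so both work from one contract instead of two loosely matching prompts.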

Vibe coding is fine for prototypes. But if you’re shipping to production, you need a mental model that keeps you and your agents productive.